A personal letter on transformative AI

Making sense of rapid AI progress

George Rosenfeld is Deputy Director for Global Catastrophic Risks at Coefficient Giving, which covers our Navigating Transformative AI and Biosecurity funds. In April, he wrote a letter to his friends and family about why he thinks AI could transform the world in the coming years, and why the current trajectory worries him.

It’s a personal letter, written for people who are encountering these arguments for the first time. We’re sharing it because we think more people should be having these kinds of conversations.

Note: this letter was shared at the start of April. In the roughly six weeks since, Anthropic’s annualized revenue has reportedly grown to $45 billion (up from the $9 billion figure in December 2025 cited below), and it announced the development of Mythos, a powerful new AI model that it claims was able to find security vulnerabilities in every major operating system and web browser when prompted. The letter doesn’t incorporate these developments, but AI progress continues at a blistering pace.

Dear family and friends,

For the past two years, I’ve worked at Coefficient Giving on the Navigating Transformative AI program. For the most part, our work involves thinking about what might happen with AI over the coming years, trying to get a sense of how powerful and transformative it might become, and doing whatever we can to help make the world more prepared.

The sorts of scenarios my team thinks about sound pretty extreme and sci-fi. We think about worlds where AI systems are able to do practically everything humans can do, just faster and better; where decades of scientific progress start to happen in months or years; and where we’re asking ourselves not just what AIs can do, but whether humanity can remain in the driver’s seat at all.

It’s hard to overstate how completely wild these possibilities are. If these outcomes are even somewhat plausible, then we may be living through one of the most important turning points in human history.

I don’t know for certain whether any of these things are going to happen. I also don’t know whether these things would be very good (think: unprecedented human flourishing), or very bad (think: all humans dead or disempowered), or somewhere in between.

I should also be upfront that these views are not mainstream.1 The idea that AI could automate nearly everything and transform civilization within a few years is a minority position, and one that most economists, policymakers, and people in general aren’t taking seriously yet. It certainly doesn’t feel like the world is awake to these possibilities.

I was personally skeptical for a long time too. When I first encountered these arguments, they sounded sci-fi and far off, and I took a while to come around. But over the last couple of years, my more conservative predictions have consistently underestimated how much progress AI would make, and I’ve become increasingly convinced (and increasingly worried) that these scenarios are plausible, and that they might not be far away.

I wasn’t sure whether to share this, or whether it would even be a good thing for people to read. Although I’m uncertain, my best guess is that the world is on the cusp of changing radically: that we’ll look back at this period the way we now look back on February 2020, before COVID-19 changed everything,2 except that this time the transformation will be much more drastic and the world may never go back to how it was before.

These are real views that I live with, and that increasingly guide my emotions and decisions and hopes and fears. It feels strange, and lonely, to hold them and not share them with people I care about.

In what follows, I’ll write about (1) why powerful AI could be such a huge deal, (2) how quickly we could reach that point, and (3) what unprecedented risks — and benefits — transformative AI could introduce. I also link to further resources where you can learn from people much more knowledgeable than me.

Why is AI a big deal?

Most of us interact with AI as a chatbot, an online interface that answers our questions, plans our holidays, and occasionally does our homework.3 Sometimes it feels helpful, other times useless. Either way, it doesn’t necessarily feel that ground-breaking or that different to other technologies that humans have invented.

One key difference is that unlike previous technologies, it might be possible for AI to automate everything that humans can do. Previous technologies have always been a complement to humans in some way. The printing press let us spread ideas faster, but someone still had to have the ideas. Computers can calculate faster than we ever could, but they only do what we program them to do.

AI, by contrast, could plausibly do everything that humans can, including the open-ended thinking that used to be exclusively ours. We can’t be certain that AI will be able to reach this point, but if it does, it would likely be a huge deal.

For starters, there go our jobs. Not in the historical sense of: AI automates a particular portion of human labor, so the humans reskill and move on to different jobs, as has happened many times before.4 But plausibly: AI is now better at doing basically any job than even the best humans, so there’s no other job to move on to.5 This may currently be easiest to imagine with cognitive work, though physical labor could follow. In that world, it’s unclear how most humans would earn a living, or what the economy would look like.

But job loss, as dramatic and world-changing as it could be, might not even be the most important part. Up until now, human labor has been a significant bottleneck to both scientific and economic progress. Yet with sufficiently powerful AI, we may have the equivalent of billions more top scientists, entrepreneurs, and other experts to throw at every problem at once.6

It’s hard to know exactly how fast things could move. Even with superhuman AI, there would still be bottlenecks: physical experiments take time to run, many real-world problems are intrinsically messy and complex, and human institutions, regulations, and adoption could all lag behind.7

But it’s plausible that such a world could quickly become unrecognizable from today’s, with hundreds of years of progress compressed into months or years.

Think about how different the world looks now compared to 1500: modern medicine, electricity, the internet, nuclear weapons, space travel. Now imagine that scale of change happening not over five centuries, but over five years. And unlike the generations who lived through the original transformation, we wouldn’t have centuries to adapt.8

Where are we now and where are things headed?

As of March 2026, AI hasn’t transformed the world and it hasn’t automated that many jobs. I don’t yet find it very useful for my work.

But what matters is the trend.

AI systems have already improved dramatically over the last few years. In 2022, the first widely available chatbot (ChatGPT) could hold a passable conversation but often made things up. By 2024, the best AI systems were passing the bar exam and getting silver medals in the International Mathematical Olympiad (gold followed in 2025). In early 2026, AI agents can often handle substantial software projects that take skilled humans several hours,9 and are now being used to accelerate the process of AI development itself.10

To understand what’s driving these improvements, it helps to get a sense of how AI models are developed. When we build a car engine or a bridge or a website, we know what we want it to look like, and we understand exactly how and why the end product works. But AI is different: AI companies set up a neural network (a bit like an artificial brain), train it on enormous amounts of data, and let intelligence ‘emerge’ from the model. The engineers shape this learning process, but they don’t hand-craft the results.11

Over the last few years, one of the main drivers of AI progress has been scale: As AI companies scale up the inputs (more data, more computational power, larger models),12 they watch with the rest of us as greater intelligence and new capabilities continue to appear. Many times people have bet against AI, saying it could never do some particular thing, only for the next round of scaling to prove them wrong. This pattern has held up repeatedly as models have gotten bigger, and nobody knows how fast it will continue or whether it will stop.

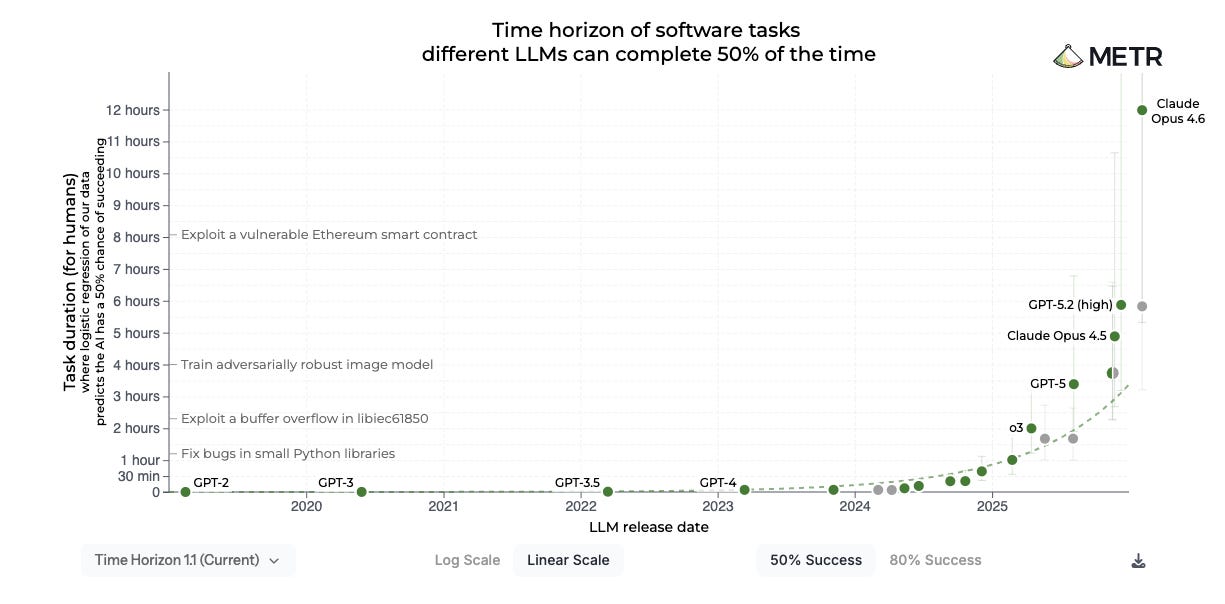

There’s a graph I keep looking at which captures this trend, from METR, an organization that evaluates AI capabilities. They measure the “time horizon” of AI systems: the difficulty of software tasks an AI can complete autonomously, measured by how long they take human experts.13 Between 2019 and 2025, METR found that time horizons doubled roughly every seven months. More recent data suggests it may now be doubling every four months.

As we learned during COVID, exponentials can change things fast: the doublings are happening quickly, and it may only take a few of them to transform the world.14
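For readers who want to check the arithmetic behind those doublings, here is a minimal Python sketch. It assumes the doubling time stays constant, which nothing guarantees. The one-hour starting horizon and the function names are illustrative assumptions, not METR’s figures or code.

```python
import math


def horizon_after(start_hours, doubling_months, elapsed_months):
    """Time horizon after elapsed_months of steady exponential doubling."""
    return start_hours * 2 ** (elapsed_months / doubling_months)


def months_to_reach(start_hours, target_hours, doubling_months):
    """Months of steady doubling needed to grow from start_hours to target_hours."""
    return doubling_months * math.log2(target_hours / start_hours)


START_HOURS = 1.0  # hypothetical starting horizon, for illustration only

# Compare the two doubling times mentioned above over a two-year window.
for doubling in (7, 4):
    growth = horizon_after(START_HOURS, doubling, 24) / START_HOURS
    print(f"{doubling}-month doubling: {growth:.1f}x longer tasks after two years")
```

Under these assumptions, a seven-month doubling time gives roughly an 11-fold increase in task length over two years, while a four-month doubling time gives a 64-fold increase. That gap is why the apparent speed-up matters so much.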

Another way to assess the trajectory of AI comes from looking at the revenue figures of AI companies, in some ways a more trustworthy indicator of real-world impact. Anthropic (the company that makes Claude) went from $100 million in annualized revenue at the end of 2023 to $1 billion at the end of 2024 to $9 billion by the end of 2025 — roughly 10x annual growth. By some measures, this makes it among the fastest-growing tech companies in history.

And the investment dwarfs even the revenue. The five biggest tech companies are on track to spend over $600 billion on AI infrastructure in 2026 alone, mostly on data centers and chips. To put that in perspective: the Apollo program to put humans on the moon cost about $250 billion (inflation-adjusted) across its entire 13-year lifespan. AI companies will spend more than double that in a single year. This is already one of the largest private investments in a single technology in modern history.
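The revenue and spending comparisons above can be checked with a few lines of Python. This is only a sketch using the rounded figures quoted in the letter; the variable names are illustrative, and the results are approximate because the inputs are approximate.

```python
# Annualized revenue figures quoted above, in millions of US dollars.
revenue = {2023: 100, 2024: 1_000, 2025: 9_000}

years = sorted(revenue)
# Year-over-year growth multiples (2023 to 2024, then 2024 to 2025).
multiples = [revenue[b] / revenue[a] for a, b in zip(years, years[1:])]
# Geometric mean of the yearly multiples over the whole span.
average_multiple = (revenue[years[-1]] / revenue[years[0]]) ** (1 / (len(years) - 1))

# Spending comparison quoted above, in billions of US dollars.
AI_SPEND_2026 = 600  # "over $600 billion", so this is a lower bound
APOLLO_TOTAL = 250   # "about $250 billion", inflation-adjusted
spend_ratio = AI_SPEND_2026 / APOLLO_TOTAL

print(multiples, round(average_multiple, 1), spend_ratio)
```

The yearly multiples come out at 10x and 9x, an average of about 9.5x per year, which is what “roughly 10x annual growth” summarizes. The spending ratio is at least 2.4, consistent with “more than double”.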

These figures alone don’t prove that AI is about to transform the economy; plenty of technologies have had explosive early adoption without changing civilization, and it’s possible the huge investment is a bubble. But combined with the capability trends above, it paints a consistent picture: either AI progress starts hitting a wall pretty soon, or it becomes the driving force of the global economy. It’s getting harder to bet on the wall.

Stepping back a bit: it’s all very well sharing graphs and trends, but when someone tells you there’s this new big thing on the horizon, it’s very reasonable to be skeptical. That was my initial reaction: I took a long time to come around to really believing that scenarios like these were plausible. But over time, the people who are extrapolating the trends and taking these possibilities seriously are looking increasingly correct, and my more conservative predictions keep being proven wrong.15

Now, when I look at what those people are predicting, they’re raising the alarm. In January, Ajeya Cotra (a former colleague of mine who recently ranked 3rd out of 413 people forecasting AI developments) predicted that AI would be able to complete 24-hour software engineering tasks by the end of 2026.16 A few months in, she’s changed her mind: She now thinks it will surpass 100 hours, and may be unbounded. In early March, she wrote: “For the first time, I don’t see any solid evidence we can extrapolate to say [full automation of AI R&D]17 won’t happen soon. AI R&D really could be automated this year.”18 In Ajeya’s view,19 we might expect AI to surpass the world’s best human experts at any computer-based task by the early 2030s, with automation of physical labor potentially following within a couple of years. Unfortunately, substantially sooner than that seems plausible too.

These claims sound wild and they could certainly be wrong. The trends could level off. The remaining capability bottlenecks could turn out to be more fundamental and/or harder to fix than they currently look. The world could wake up quickly and coordinate to slow down.20

But none of these things are happening yet, and the clock is ticking.

What about the risks?

Before I get into specific risks, a reminder of the general situation.

We may be on the cusp of building a second intelligent species21 that is smarter than us, can outcompete us in every plausible domain, and can be easily copied billions of times. We might expect this to kickstart an unprecedented explosion of technological progress that could transform the world far more quickly than we can keep up with it. And, if that weren’t enough, this is all happening while we don’t fully understand how AI models work, how they might behave, or whether we’ll be able to keep them under our control.22 That all seems pretty worrying to me.

And indeed, many leading thinkers in the field are concerned. Two of the three ‘godfathers of AI’ who pioneered the modern field now argue that the sort of AI we might see in the next few years could be an existential threat to humanity.23 The CEOs of the major AI companies themselves say that there’s a real chance this technology could cause catastrophic harm. Dario Amodei, the CEO of Anthropic, has said he thinks there’s a 25% chance that things go “really, really badly”. Sam Altman, the CEO of OpenAI, has described the worst case as “lights out for all of us.”24

And yet they race.25

On my team, we focus mostly on reducing risks that could kill billions of people or be similarly bad (or worse) in other ways. These include:

Catastrophic AI misuse: Humans could deliberately use powerful AI systems to cause catastrophic harm, such as by designing and releasing engineered pandemics in ways that would be much harder or impossible without powerful AI. More on this here.

Extreme concentration of power: AI could enable a small number of actors — companies, governments, or even individuals — to accumulate a level of power unprecedented in human history, by gaining exclusive access to superhuman capabilities without relying on the support of humans who might otherwise resist. In the extreme, this could include full-blown coups by AI company CEOs,26 authoritarian lock-in by heads of state, and other scenarios that could lead to lasting political disempowerment for almost everyone. More on this here.

AI takeover: The most sci-fi risk of all. Many researchers are worried that AIs themselves might pursue goals that are different to ours once they’re smart enough to escape our control, potentially leading to humanity being disempowered or even wiped out entirely. Unfortunately, making sure that an AI that is smarter than us follows our goals is currently considered an unsolved technical problem; researchers may solve it in time, but there’s no guarantee. This type of risk is less intuitive than the others and requires deeper explanation: you can read more here (see also the footnote above).

There are lots of other less doomsday things to worry about too: mass job displacement happening faster than society can adapt, loss of meaning as traditional sources of contribution disappear, extreme political and social instability, the disruption of institutions that can’t keep up.

Fortunately, I don’t think that any of these outcomes are guaranteed. And at least for the most catastrophic risks I listed above, my guess is that it’s more likely than not that we’ll avoid them. That said, the risks here still seem frighteningly high — likely greater than any other problem we face.

In my view, a sensible world would be mobilizing hard and coordinating to slow right down. Instead, we’re asleep at the wheel.

Not all bad

This focus on risks makes it sound like AI is all bad. I don’t think that is the case. On the contrary, if it doesn’t cause a catastrophe of some kind,27 I think that superintelligent AI could be one of the biggest forces for good in our history.

The extreme pace of scientific and economic progress that I described earlier could mean we solve scientific problems that would otherwise be out of reach, cure diseases,28 reduce poverty, give rise to material abundance beyond anything we’ve known, and expand human flourishing in ways that are hard to imagine today.29 The potential upside here is staggering.

I realize I just said the world should slow down and I’m now describing the enormous benefits of continuing at the current pace. These trade-offs are real.30 Every year we delay could be a year where people die of diseases that AI might have cured; yet every year we rush could be a year where we build something we can’t control. The stakes here could hardly be higher.

What can you do?

If you’ve gotten this far and are feeling overwhelmed — believe me, I get it. I’ve been sitting with these questions for several years and thinking about them almost every day, and I still get regular waves of stress, urgency, and sometimes even disbelief that this could really be happening.

If this all turns out to be right, the coming years may involve some of the most consequential decisions in human history: how this technology is developed, who controls it, and how fast we let it out. It’s quite remarkable that we happen to be alive at just the right time to influence how things go.

If you feel persuaded (or even intrigued) by some of these arguments, I’d encourage you to learn more, form your own views, and think about what sorts of actions might make sense if you become convinced that these things really could happen. Prepare to make your voice heard — whether that’s speaking to people you know, reaching out to a political representative,31 working more directly on these issues, or something else.

And if you’re wondering whether you’d have anything more to contribute: lots of people without any kind of technical background (including me!) have been able to switch into helping with AI quickly, and the range of useful work is broader than I’d expected.

As a starting point for digging deeper, I’ve included a list of the resources I’ve found most useful below.

I started this letter by saying that it’s felt strange and lonely to hold these views without sharing them. Writing this has helped with that, and I hope reading it has been worthwhile. I don’t know how the coming years will go, and it’s possible I’ll look back on this and cringe at how wrong I was. But if it’s even roughly right, I think we’ll wish we’d started paying attention as soon as we could.

George

Links to learn more

Here are some great resources to learn from people who know a lot more than I do.

Top recs:

AI in Context YouTube channel: really high-quality videos, focusing mostly on the risks

80,000 Hours problem profiles for power-seeking AI, concentration of power, and catastrophic AI misuse: among the best resources for understanding the risks

The same website also has this excellent resource for anyone interested in using their career to work on these problems

The AI Doc, or: How I Became an Apocaloptimist: new movie which just came out, a good and very watchable general introduction to the possible trajectories of AI.

Ajeya Cotra podcast episode: recent episode from Ajeya (who I mentioned a couple times above) about where we’re at as of early 2026

In general, I think the 80,000 Hours podcast is the best series on these topics

BlueDot Impact: an excellent set of free introductory courses and other resources for people looking to learn about these topics for the first time and start helping out.

Further reading/listening:

How not to lose your job to AI: article that does what it says in the title!

Most Important Century: excellent blog about transformative AI and why this might be the most important period in human history

AI 2027 by the AI Futures Project: detailed scenario of a potential AI take-off taking place in 2027 (this is sooner than I expect it, and sooner than the authors’ median guess, but still a helpful depiction of how things could go)

Planned Obsolescence: excellent Substack by Ajeya Cotra and Kelsey Piper on transformative AI, including The Costs of Caution (on benefits of AI) and Ajeya’s recent update towards shorter timelines

If Anyone Builds It, Everyone Dies: recent book by Eliezer Yudkowsky and Nate Soares that focuses on AI takeover risk. I think it’s over-confident in its conclusion (i.e. I don’t think a superintelligence would definitely kill everyone), but it’s still a helpful description of some potential risks

Machines of Loving Grace and The Adolescence of Technology: essays written by Dario Amodei (Anthropic CEO) on the potential benefits and risks of powerful AI

If you’d like to do more

The letter above was written to share George’s perspective with people new to these issues. If you’re already familiar with AI risk and looking to take stronger action:

Explore a career pivot via 80,000 Hours’ AI careers page, the best starting point for thinking about working on these issues directly.

Donate to Longview Philanthropy’s Emerging Challenges Fund, which supports organizations working on AI safety and governance.

Apply for Career Development and Transition Funding through Coefficient Giving, which funds people transitioning into AI risk work.

Footnotes

3. So I’m told.

4. Just ask the 18th-century farmers, the 20th-century bank tellers, and many others.

5. Except if we require or have a strong preference that certain jobs have to be performed by humans, which seems plausible at least for some time, though not guaranteed.

6. Dario Amodei, CEO of leading AI company Anthropic, refers to this possibility as ‘a country of geniuses in a data center’.

7. Lots more on what may or may not be bottlenecked in Amodei’s ‘Machines of Loving Grace’. This piece by Epoch AI summarizes some of the strongest arguments for and against.

8. Holden Karnofsky, former CEO of Coefficient Giving, has an excellent blog series on how this could go (among other things) here.

9. Though they don’t always succeed.

10. It’s worth noting that AI progress has been uneven. Models often do much better on formal, verifiable tasks like maths and coding, while still struggling in some situations that rely on common sense or contextual judgment. If you’ve tried a chatbot and come away unimpressed, that may be why, and it’s a plausible reason to think that reaching the point of full human automation could take longer than some predict. That said, each generation tends to improve across multiple domains, and the domains where AI is currently strongest could be particularly important, since they may accelerate the process of AI development itself.

11. One implication of this is that we can’t confidently predict how AIs might behave in novel situations, including when they might at some point be more powerful than humans.

12. A bit like applying the same techniques to a bigger artificial brain.

13. The idea here is that as this time horizon gets longer, AI systems can take on increasingly substantial work. It’s not to be confused with ‘the length of time an AI can operate autonomously’, which is less relevant.

14. There are important limitations of this methodology. For starters, it mostly focuses on software engineering-related tasks, one of the areas where AI is currently strongest (though also a particularly important area, since automating this could automate the process of further improving AIs). It’s also running up against a practical ceiling: it’s expensive and time-consuming to create tasks at the 12+ hour level and pay engineers to complete them, which means we’re running low on examples of things the best models can’t do. Thirdly, it’s generally very difficult to translate scores on benchmarks like these into real-world impacts: it’s been notable that even as AIs have performed better and better on benchmarks such as this one, they haven’t yet made a huge mark on the world. Nevertheless, the direction of travel seems hard to dismiss.

15. You can also feel the ground starting to shift: companies are beginning to announce layoffs linked to AI automation, protesters are marching between AI companies in San Francisco demanding they slow down (a small group at least for now), and recently Bernie Sanders stood on the steps of Congress calling AI “the most sweeping technological revolution in the history of humanity”. A year ago, almost none of this was happening, and this is still just the start.

16. Referring to the same METR graph I included above.

17. AI R&D is the process of developing new and better AI systems. AI researchers are particularly interested in the point at which this process can be automated because we might expect that the rate of AI capabilities improvement will grow even faster after that point, since the process will itself be driven by AIs rather than humans.

18. To be clear, she doesn’t think this is very likely to happen in 2026, but it’s the first time she hasn’t been able to rule it out.

19. Recorded before her latest update towards shorter timelines.

20. Not intended as an exhaustive list. As I mentioned earlier on, many people disagree with these predictions.

21. Upon reading this letter, some might reasonably argue that it’d be the first…

22. Recall from above that unlike many technologies which are designed and built by engineers who know exactly what they do and why, AI is ‘grown’ by putting a neural network through a training process, scaling it up, and allowing intelligence to ‘emerge’. This means that the designers of the models themselves are sometimes surprised by the behavior of their systems. It also makes it hard to be confident in the ‘intentions’ of AIs or how they’ll behave in novel scenarios, especially if/when they become smarter than us. Researchers have already produced controlled demonstrations in which frontier models behave differently depending on whether they appear to be in training or evaluation; there is also evidence that AI models sometimes engage in reward-hacking or deceptive behavior. These are not proof that today’s systems are uncontrollable, but they are early warning signs, particularly concerning in the context of future more powerful models.

23. The third, Yann LeCun, disagrees.

25. I think this short video is a pretty good summary of the situation.

27. Which, to be fair, is roughly as big as ‘ifs’ come.

28. Maybe even aging?

29. Dario Amodei (Anthropic’s CEO) has written about what this could look like here (though this is obviously just one perspective).

30. And described nicely in this piece.
